Reaching for Self-efficacy through Learning Analytics: The Zenith project

Gilly Salmon [gSalmon@oes.com], Online Education Services, United Kingdom and Swinburne University of Technology, Australia, Shaghayegh Asgari [S.Asgari@liverpool.ac.uk], University of Liverpool, United Kingdom

Abstract

We report on a large pilot study (known as “Zenith”) of the implementation of Learning Analytics in a traditional campus-based university in the process of moving towards blended learning. The pilot had multiple objectives, but here we report on the initial insights gained from a grounded exploration of the students’ perspectives and experiences of the introduction, for the first time, of personal Learning Analytics (LAs) reports. The intention of the reports was to provide rapid electronic feedback to students through daily visual reports. Feedback was provided to the project team throughout the semester and adjustments were made through immediate actions. The students’ responses provide an early exploratory grounded view of their experiences, which we report here to offer insights for those wishing to use Learning Analytics beyond the obvious “prediction” scenarios. Key emergent outcomes included handling complexity, the variety of stakeholders, the consequent need for extensive communication, support, data handling and ethical considerations as well as confidence in the data. We also explore the need to enable students to understand the purpose of student-facing Learning Analytics reports, and a variety of ways in which they may be experienced by differing cohorts of students.

Abstract in French

Nous rendons compte d’une vaste étude pilote (connue sous le nom de « Zenith ») sur la mise en œuvre de Learning Analytics (analyse des traces de l’apprentissage) dans une université traditionnelle en cours de transition vers un modèle hybride. L’étude globale avait plusieurs objectifs, mais le présent article fournit un commentaire portant sur les connaissances initiales tirées d’une exploration empirique des points de vue et des expériences des étudiants concernant l’introduction de rapports personnels de Learning Analytics pour la première fois. Les intentions étaient de fournir aux étudiants une rétroaction électronique rapide par le biais de rapports visuels numériques quotidiens. Des réactions et des indications ont été fournies à l’équipe du projet par les étudiants et les enseignants impliqués tout au long du semestre, et des ajustements ont été apportés par des actions immédiates. Les réponses des étudiants fournissent une vision ancrée de leurs expériences ainsi que des éclairages pour ceux qui souhaitent utiliser les Learning Analytics au-delà des scénarios habituels de « prédiction ». Les résultats clés émergeants comprennent la gestion de la complexité, la variété des parties prenantes, la nécessité d’importants efforts de communication, ainsi que les questions de soutien, de traitement des données, de considérations éthiques et de confiance dans les données. Nous explorons également la condition préalable pour que les étudiants comprennent le but des rapports Learning Analytics, et une variété de façons dont les Learning Analytics peuvent être vécus par différentes cohortes.

Key words: Learning Analytics, self-efficacy, learning innovation, student-centred, electronic feedback, personal visual data reports.

Learning Analytics in Higher Education

At the time of the pilot, Higher Education Institutions were operating in a continuously shifting context influenced by a variety of opportunities and challenges, including globalisation and changes to government funding and policy (Swain, 2018; Universities UK, 2015). However, perhaps creating the most interest and impact on education provision were digitalisation and the mantra of student-centredness and learning experiences (Salmon, 2019). Most universities around the world are reconsidering the ways in which pedagogy can become more attuned to current and future generations’ preferences, values and attitudes to learning (Archambault, Wetzel, Foulger, & Kim Williams, 2010; Beetham & Sharpe, 2013; Kozinsky, 2017).

Businesses and public sectors were rapidly and increasingly deploying big data as a tool to inform processes and strategies and to enhance customer service and relationships (Siemens, 2013 update). However, educational institutions and their academic staff have been somewhat slow to embrace this particular digital frontier (Costa, 2018; Daniel, 2017), probably due to ethical concerns and the necessary integrations of technologies (Sclater, 2017). The potential of data mining and the deployment of visual data to enhance students’ experiences continues to be mentioned as powerful but needing research (De Laat & Prinsen, 2014; Sclater, 2017).

The research and practice of Learning Analytics (LAs) builds its foundations on a plurality of disciplines, including data mining, business intelligence, machine learning, and web analytics (Siemens, 2013; Stewart, 2017). For this project, “Zenith”, we used the definition of Learning Analytics set out by the International Conference on Learning Analytics and Knowledge (LAK, 2011):

“the measurement, collection, analysis, and reporting of data about learners and their contexts, for the purposes of understanding and optimizing learning and the environments in which it occurs”

Claims for the impact of LAs include the improvement of decision making and hence organisational resource allocation, the creation of shared understandings of successes and challenges within the institution, the enablement of academic staff to adopt more innovative pedagogical approaches and the boosting of organisational productivity and effectiveness (Sclater, Peasgood, & Mullan, 2016).

There has been considerable interest in “at-risk learners” and the potential to identify them early and provide appropriate interventions to help them succeed (Ifenthaler, 2017; Sclater, 2017).

However, in this study, we viewed LAs not just as a tool for institutions and academic staff but also as offering the potential to provide learners with additional insight into and understanding of their own learning through more personal and relevant visual data, leading to recommendations and suggestions to improve their academic performance (Sclater, 2017; pp.125-135; Siemens & Long, 2011). The project, named “Zenith” and explained below, focused on placing learners at the centre of the data experience (Chatti, Dyckhoff, Schroeder, & Thüs, 2012).

Aspirations for LAs at the University of Western Australia

The study of LAs reported here took place in the University of Western Australia (UWA). UWA is a sandstone university established in 1911 in Perth, Australia. UWA is part of the Group of Eight, a coalition of research-focussed universities in Australia. UWA has educated Nobel Prize winners, politicians, sportsmen, and notable CEOs (Jayasuriya, 2006; The University of Western Australia, n.d.).

From 2014, following an extensive collaborative consultation, the University launched its large-scale “Education Futures” initiative, which included special emphasis on the changes and drivers to new forms of future-facing educational provision in a traditional Higher Education campus setting. The Education Futures initiative underpinned the wider University objectives for the increase in student satisfaction ratings and in the utilization of educational technologies. Amongst a wide variety of innovations, the Centre for Education Futures set up a large project on the impact and potential of LAs within its blended learning developments. LAs had not been used in the university previously. The project was code named “Zenith” (Zenith project : Education at UWA : The University of Western Australia, n.d.).

At the time, most LAs implementations and studies were centred around predictive analysis (JISC, 2017; Jorno & Gynthner, 2018). However, the high calibre of students at UWA meant that addressing risk and retention was not a priority. Instead, the major priority was to design a pilot study to explore and address students’ academic self-efficacy, and to inform learning design. Learning design was the focus of another major project in the university running concurrently with Zenith.

Following consultations with academic and professional staff, advisory boards, committees and students, the aspirations for LAs at UWA were articulated. The intent was to foster a greater sense of motivation and academic self-efficacy in UWA’s students, by providing them with just-in-time feedback on their engagement, learning, choices and achievements in the digital learning environment. Furthermore, the aim was to simultaneously empower academic staff to use analytics and offer them reports designed to provide just-in-time feedback on their learning design.

As De Laat and Prinsen (2014; p.52) suggest, LAs can help students to reflect and make informed decisions as they track their achievements and digital interactions. Furthermore, this gives students an indication of preferred learning behaviours: “… this will raise awareness and equip students with the kind of orientations necessary to meet the demands of the emerging open networked society”. In other words, LAs feedback could give students some “bearings” on where they are in their learning process, helping them to navigate in the future. It was this key idea that inspired the Zenith team.

The trackable digital footprints of interactivity within online learning environments are data which can be captured and fed back to the students to become actionable information. The visual representation of this data should be easily accessible and intuitive to inform critical reflection on students’ learning processes (Echeverria et al., 2018). UWA anticipated that a tool of this kind could provide the opportunity for UWA students to make personal data-driven or evidence-based decisions in their learning context and through time. Hence, the Zenith project aimed to assist in empowering the students to take control of their learning process, to encourage critical self-reflection and to help them to realise their capabilities to regulate and adapt their learning strategies towards their academic goals.

The overall Zenith project included ethics and data guidelines development, communications and a full technical set up. The learning and teaching aspirations, together with the transparency of the project, helped to mitigate the “natural” risk aversion in the traditional university.

Self-efficacy and self-regulated learning in the context of LAs

The Zenith team was struck by the notion of the potential empowerment of learners by LAs. Hence, the Zenith team sought to position the student-facing aspects of the Zenith project in cycles of feedback and action.

Elias and MacDonald (2007; p.2519) defined Academic Self-efficacy using Bandura’s (1977) research as: “learners’ judgments about their ability to successfully achieve educational goals”.

Self-efficacy is an introspective self-experience, concerned with internal judgement and self-perception rather than a deficiency in capabilities (Usher & Pajares, 2008). Students lacking in confidence in their capabilities to manage their learning are more likely to give up when faced with challenges and are less equipped to implement adaptive strategies (Usher & Pajares, 2008; p.460). Substantial research into self-efficacy has shown a strong relationship with a related construct: perceived control (Elias & MacDonald, 2007; Schunk, 1991; Zimmerman, 2000; 2002). An internal locus of control is defined by learners being intrinsically motivated and attributing their academic successes to their own abilities and efforts rather than to factors outside of themselves. Such students are more successful than those with an external locus of control. An external locus means students might attribute both successes and failures to factors such as luck, fate or whether a teacher likes them or not (Broadbent, 2016).

An ability to critically reflect and self-regulate learning is also identified as contributing to the achievement of educational goals. “Self-regulation is not a mental ability or an academic performance skill; rather it is the self-directive process by which learners transform their mental abilities into academic skills” (Zimmerman, 2002; p.65). These processes and skills include time management, metacognition, effort regulation, motivation and critical thinking – all of which are positively correlated with academic outcomes (Broadbent & Poon, 2015). Self-regulation is a key to academic success (Broadbent & Poon, 2015), though “the belief in one’s self-regulatory capabilities, or their self-efficacy for self-regulated learning, is an important predictor of students’ successful use of …skills and strategies across academic domains” (Usher & Pajares, 2008; p.444).

There is also a growing movement often called the “quantified self”. Self-measured quantitative data provides insights into people’s lives that might not otherwise be available or usable. For example, Lupton (2016; p.68) discusses how, through data, individuals can acquire “self-knowledge through numbers” by using devices such as a Fitbit to constantly take readings and collect personal data sets. Others explore motivation and include self-quantification as one option to improve learning outcomes (Hamari et al., 2018).

Hence the Zenith project proposed that a fertile environment, which nurtured development in learning and promoted students’ self-efficacy skills and their capabilities to learn, might better enable students to become more effective in carrying out their learning processes. One way of doing so was to offer students the means and tools to take more control of their learning through the LAs visual reports. To foster academic self-efficacy in the students, Zenith’s aim was to encourage self-reflective work by providing them with the means to observe some of their own and their peers’ interactive behaviour, and to inspire them to take control, make adaptive changes and regulate their learning strategies to work towards their learning goals.

Zimmerman’s in-depth work and contribution to the understanding of learning and the self elucidates that learning is an activity in which students engage themselves: it is a pro-active process rather than a by-product or reaction that happens because of teaching (2002; p.65). A key element in this active process is feedback, “as it enables students to monitor their progress towards their learning goals and to adjust their strategies to attain these goals” (Butler & Winne, 1995). The Zenith team felt that the provision of personalised, fast and relevant LAs might provide students with a more solid and measured representation of their online study behaviours and actions and thus encourage them to reflect on and regulate their approaches to learning.

The Zenith project and study were therefore set up with the somewhat lofty aim of fostering self-efficacy in the UWA students and promoting the effectiveness of their capability and capacity to carry out the constructive steps for their learning process.

From these opportunities the Zenith sub-aims were developed:

- How the Zenith LAs visual reports might function to introduce and develop more self-efficacy in the University’s students through improved personal information, promote learning motivation and encourage students to seek appropriate relevant advice and support at the right time for them.

- How the Zenith LAs reports might function as an additional and fast feedback to students (conceived as a form of electronic mentoring or feedback).

Zenith Pilot 1 Overview

The Zenith Project was led by the Centre for Education Futures at UWA, in partnership with the Faculties to explore the benefits and opportunities through an evidence-based highly collaborative, institution-wide approach. The Zenith Project commenced in August 2016. Zenith was set up with seven streams of work including policy guidelines and ethics, technology enablement, capability and capacity building for staff and students, communications and action research. This paper reports on the action research for Zenith Pilot 1 from the perspective of the students’ experiences.

Consent and transparency were considered critical issues since many key stakeholders had limited knowledge of LAs in practice. Guidelines for Zenith were developed very early in the project with the University’s Secretary and Policy team and with all stakeholders, most especially students, based on existing use of student data, with further reference to the JISC Code of Practice (JISC, 2017). We felt it was very important that clear and transparent processes were in place and fully tested in practice, accommodating any new or emergent issues, to provide a baseline for all future LAs projects in the University. The Ethics Permission for the research was similarly given high priority and was received in January 2017. We considered it a valuable by-product of the project that we could start to explain to our students some of the complex issues around stewardship and legitimacy of the deployment of LAs, and that they began to understand not only the potential power of data analytics but also everyone’s responsibilities around personal data, privacy and consent. Note that large cohorts were required so that all data was not only anonymous but also could not be traced back to individuals (Sclater, 2017; p.133).

The Pilot Zenith (2016-7) was designed to deploy digital data to enable students to access and understand a frequent, visual, clear picture of their study progress, empowering them to reach out for support and offering them greater control over their learning experience. We also ensured that the best achievable blended learning processes and pedagogy were in place through Carpe Diem learning design (Salmon, Armellini, Alexander, & Korosec, 2019) and that the lecturers involved in Zenith were well briefed (Salmon & Wright, 2014).

The first Zenith pilot commenced in September 2016, with nine academic leads selected by October 2016. The pilot included around 2000 students from 1st to 4th years of undergraduate study and two postgraduate taught courses. The courses comprised 3 from Business, 2 from Arts, 1 from Education, 1 from Engineering and 2 from Science.

Development of the structure and format of the LAs reports for students, and of somewhat different LAs reports for the academic leads, was completed by December 2016, and the reports were developed further throughout the Pilot. The Zenith Pilot 1 commenced full operations with students at the beginning of Semester 1 2017. The Semester ran February to June 2017, with cycles of feedback and revised actions throughout.

Overview of the LAs visual reports

The Zenith reports for students were based on a series of learning analytic tools devised in consultation with the University’s partner, Blackboard, who provided support and consultancy. After consultation with the student body representatives, the Zenith student reports were designed to provide a visualisation of the most relevant and meaningful data, making it most interpretable and useful for students to action changes to learning strategies or to seek assistance (Ali, Asadi, Gašević, Jovanović, & Hatala, 2013; Papamitsiou & Economides, 2015).

Data was drawn from several data points and integrated with the systems already existing in the university, including the Student Information System and the Learning Management System/Virtual Learning Environment (LMS/VLE), Blackboard Learn. No demographic or personal data was deployed. The data captured the students’ activity online, including accesses, time spent in the relevant learning environment in the LMS/VLE, page clicks and views, and students’ own contributions and interactions, and was also linked to the Grade Centre to integrate marks and grades. Blackboard’s own “Analytics for Learn” add-on was deployed for the collection and collation of data and presentation of reports.

The data was curated into a meaningful and approachable visual representation that was interpretable by students. Zenith output for students consisted of four visual reports that were generated in near time. The reports were updated nightly and presented visually every 24 hours throughout the 12-week Semester.

The reports included materials on the LMS/VLE that were most popular, accessed and used by the student cohort, Things to Do Next, Communication and Collaboration, Total Activity Compared to the Cohort Average, and Performance. Each report was completely personalised for an individual student and included accesses, time spent in the digital environment, their online submissions, contributions such as discussion postings, which were then compared to the cohort average as well as the top 25% average of the cohort.
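As a hedged illustration of the comparison described above, the following Python sketch shows one way a single student's activity count could be set against the cohort average and the average of the top 25% of the cohort. It is not the method used by Blackboard's Analytics for Learn, which is not documented here. The function names, the sample figures and the reading of "top 25% average" as the mean of the highest quarter of students on a metric are all assumptions made for illustration.

```python
from statistics import mean


def cohort_benchmarks(values):
    """Return (cohort_average, top_25_average) for per-student values.

    Assumption: "top 25%" means the highest quarter of students on this
    metric, rounded down, with at least one student included.
    """
    if not values:
        raise ValueError("cohort must contain at least one student")
    ranked = sorted(values, reverse=True)
    top_n = max(1, len(ranked) // 4)
    return mean(values), mean(ranked[:top_n])


def compare_student(student_value, values):
    """Build a small comparison record for one student (hypothetical layout)."""
    cohort_avg, top_avg = cohort_benchmarks(values)
    return {
        "student": student_value,
        "cohort_average": cohort_avg,
        "top_25_average": top_avg,
        "above_cohort_average": student_value > cohort_avg,
    }


# Hypothetical weekly LMS access counts for a cohort of eight students
accesses = [12, 30, 7, 22, 18, 40, 15, 16]
report = compare_student(18, accesses)
print(report)
```

A record of this kind could feed a simple bar chart with three bars per metric (student, cohort average, top 25% average), which is the sort of comparison the student-facing reports presented.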

The Student Zenith Reports also included links to the websites of UWA’s Student Support Services, located on campus and called Student Assist, and STUDYSmarter. Student Assist is run by the Student Guild and offers professionally trained teams to help with any academic, welfare or financial issues. STUDYSmarter provides a service to UWA students to support their study, communication, maths, English language, writing and research skills.

Zenith reports were protected by restricting access to authenticated users, with a middleware authentication system. All data was on secure connections and time stamped with a key lock. The Blackboard Analytics for Learn system was hosted externally by Blackboard but separately from the University’s regular digital environment.

Recruiting Participants and methods of feedback

Communication about the Zenith Pilot project was initially made through the Associate Deans Teaching and Learning. Academic leads were invited to apply from across all faculties. Academic leads were self-selected and then filtered according to selection criteria, which included at least 50 students in a course cohort (many had 200+), an interested academic leader, and an attempt to cover courses from all the faculties in the university, which was successful.

Rapport between the Zenith project team and the academic leads was established in late 2016. Throughout the semester, participating academic leads were emailed a series of online surveys and invitations to focus groups.

Recruitment for student evaluation was originally undertaken in a variety of ways, including the announcement function on the VLE/LMS, information on the Zenith support web site, academic leads communicating with the students, and the researcher and research assistant visiting face-to-face classes to invite students to participate in the evaluation. A short video, in which the researcher and research assistant presented a summary of the Zenith pilot and evaluation and called for student participation, was made available to students and academic leads.

The Zenith project team were included in the evaluation as a means to capture the lessons learned in an ongoing manner. This team was made up of members from different services within UWA. Team members comprised staff from the Centre for Education Futures, STUDYSmarter, Student Assist, Library Client Support Officers, and a student Education Council representative. The project team was made up of a series of smaller teams, including the Capability and Capacity Team (responsible for resources and learning design support), the Digital Environment Team (responsible for technical support), and the Research Team (responsible for the evaluation and assisting with resources).

Evaluation

The evaluation stream of Zenith was placed in the context of a traditional institution undergoing a major change towards the future of education. Evaluation for action offers frequent actionable feedback to the team so that rapid beneficial changes in processes can be made as appropriate (Maruyama, 1996; Watkins, Nicolaides, & Marsick, 2016).

The action evaluation design was embedded in the overall Zenith project approach. Everyone involved on the core and wider Zenith project team was considered a stakeholder and respondent. Mixed methods captured the iterative process of integrating the use of the first LAs tool at UWA. In practice, evaluation results were fed back to the Zenith pilot team in a continuous channel throughout the project, supporting an adaptive approach for the project, and constructively informing other streams of work for the project, especially technical and academic.

Focus groups were used to collect qualitative data and to identify emerging themes, given the exploratory nature of the study. These yielded largely free-flowing and “grounded” data, on which we comment below (Holton & Walsh, 2016). There were instances where half a dozen students wrote to the Zenith evaluation team stating they wanted to participate in the focus groups but were unable to make the scheduled time. In these instances, the Research Team arranged semi-structured interviews with the students.

At the beginning of the semester, the Research Team struggled with recruiting student participants for the evaluation, due to students’ competing priorities. Three members of the Zenith team then attended lectures and tutorials to encourage participation, give out Expression of Interest forms, and offer brief overviews of the Zenith reports. These actions resulted in somewhat increased participation in surveys and attendance at focus groups and interviews. One researcher also wrote up her notes in ethnographic style and provided regular summary insights to inform the Zenith Team.

Two online surveys were deployed for students during the semester. The purpose of the online surveys was to capture the usability of the Zenith reports and to assess which aspects of the reports appeared to provide the most useful data to foster student motivation and self-efficacy.

Student Support Services Data and Metadata Meetings were set up with key stakeholders across the University. These included STUDYSmarter, Student Assist, and the manager of the Client Support Officers located in the University’s libraries. Discussions included methods of collecting data which recorded students using their services as a result of the Zenith report. These student support services sent through their data to the Zenith team every three weeks. The metadata captured student access to the Zenith report on a weekly basis and was provided by the Digital Environment Team. In total there were seven data points from which the data was continuously collected. The collated data was presented to the Zenith Project Team at fortnightly meetings. The just-in-time approach reflected the values of rapid feedback and permitted the Zenith Project Team to take immediate actions during the semester wherever needed and appropriate.

A series of tools was adopted to reinforce the validity and reliability of the data:

- Self-reflective journals: these allowed for reflexivity and the acknowledgement of any biases and values held individually by members of the Research Team.

- Peer debriefing: working in a team permitted everyone to reflect on ideas and discuss biases further.

- Audit trail: an audit trail was kept by members of the Research Team, in which every step, thought and idea was recorded. Keeping an audit trail assisted when responding to challenges and queries emerging throughout data collection.

- Triangulation: triangulation was reinforced by using multiple data collection methods. It also assisted with cross-checking the data against other sources, such as other participants and student support services staff.

Surveys

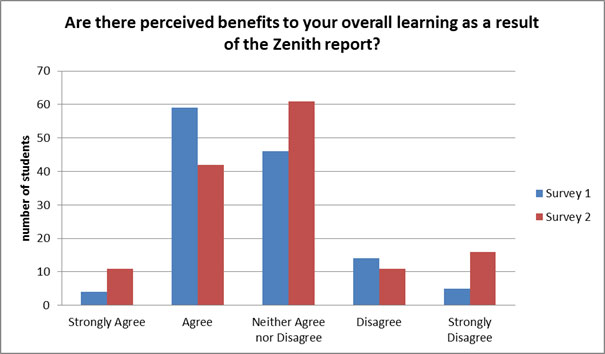

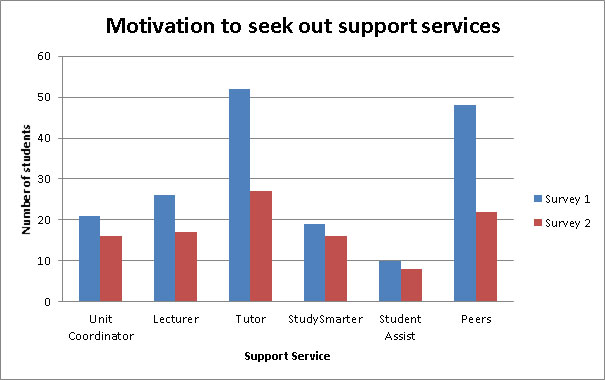

We report here some sample results from the surveys, which demonstrate a wide variety of responses, with a small bias towards agreeing that the reports brought “benefits” to students’ learning, and a slight tailing off in perceived benefits towards the end of the semester for some. However, it seemed the reports did motivate students to seek early help with their studies. The data shows that Zenith students made better use of local campus-based study support services and/or sought help from academics or peers. These responses by students were supported by other data, as we report later, and could perhaps be considered the start of self-efficacy.

Figure 1. Perceived benefits self-reported by Zenith students

Figure 2. Zenith student surveys – Motivation to seek out support services as result of Zenith report

The study data was themed against the various objectives of the Zenith project. As previously noted, we report here the results of the investigation into students’ experiences. The findings include some evidence of self-efficacy to support learners in their studies, prompted by their engagement with their own Zenith reports. We also note other emerging themes relevant to the learners’ experiences.

Students and Engagement

The Zenith team put effort into promoting, through a wide variety of messages and channels, the availability, use and purpose of the Zenith reports for students. However, the team agreed that messages through the academic leads on the students’ courses were likely to be the most acceptable and effective. The academic lead/lecturer’s role was considered vital in achieving student engagement. The Zenith academic leads were requested to introduce Zenith to their students in week one of the semester and include a brief summary of the Zenith pilot in the written learning outline.

Nevertheless, during the first round of student focus groups early in the semester, it rapidly became apparent that there was a major lack of understanding of the potential benefits, purpose and functionality of the Zenith reports. Typical responses were:

“…it’s unclear to me what the system is supposed to achieve.” (Zenith student)

“Beyond seeing the habits of high performing students I’ve not seen any other importance for the report and in turn I’ve not been a regular user. This could possibly be due to my lack of understanding of its overall function alongside it being my first semester…” (Zenith student)

One academic staff member reflected on the students’ lack of awareness of the Zenith pilot, suggesting the timing of implementation was overshadowed by the commencement of the semester and many competing pressures, on both students and staff. Typical staff comments were:

“For me you’re approaching me in first week where everything else is saying look at me, do this, do that, and you’re saying it’s up to you whether you do this, well only the very highly motivated students will do it, but in other years it will probably be a bigger take up” (Zenith module/unit leader)

“…how clueless are we about it, so what am I going to tell them, ‘you’ve got this Zenith thing, and it’s a thing, so click on it, and check it out’, they haven’t had someone sit them down, and take fifteen minutes to work through it, and they haven’t presumably seen the YouTube video, so how would they know?” (Zenith academic)

“I don’t know if they (students) don’t understand, I’ve never demonstrated anything in class, I’m just thinking whether I need to do that or not” (Zenith unit/module leader)

This uncertainty amongst the staff permeated through to the students. One student from a focus group shared their lack of understanding and engagement:

“I don’t know what expectation was placed on the lecturers or the people presenting the information to the participating groups, because I don’t believe it was very clear to our lecturer actually how this whole thing was going to roll [sic] out and how it was going to be beneficial or integrated with what’s going on within that unit and the participants in it.” (Zenith student)

For the students in the first focus group it was apparent how little they knew about the Zenith reports. Students had varying degrees of understanding. Two students felt that the communication from their lecturer was very minimal – “it was a mere mention that the reports exist”. These students had not accessed their reports and were not sure how the reports could be utilized.

Another student had a strong understanding of the purpose of the reports and was already using them for their intended purpose of self-reflecting on the learning process. This student’s lecturer had been enthusiastic about the reports, communicating about them and encouraging students to use the reports at the beginning of semester.

The Zenith reports were accessed directly from the VLE/LMS menu. However, all other information, including how to access and interpret the reports and the evaluation surveys, was on a separate “Support” site, for practical and technical reasons. This proved to be a problem and certainly contributed to students’ lack of knowledge about how to interpret the Zenith reports:

“The stuff down the bottom for me on LMS, for me – the important stuff I need to know is up here. So I think that’s probably just a positioning thing, if it was actually in the unit <module> that you’re ‘testing’ then I would’ve probably gone into it…” (Zenith student)

Upon reflection, we found that for the students to understand the potential and usability of the reports, considerably more communication and support must be given to enable them to interpret and use the visual information:

“We didn’t have any assessments when she [referring to Academic teacher] did talk about it so we couldn’t really see what we had done, but we did look at how our accesses were different to other people, which was pretty cool” (Zenith student)

The feedback from students gave the Zenith project team insight into the actions required to increase student engagement. The team set up Zenith refreshers for the academic leads, increased notifications via the LMS/VLE announcement board and created a routine of in-class visits from the project’s Research Team. It is also likely that, for the students, the haze of the first few weeks of semester had cleared a little, so the renewed and refreshed communication seemed more relevant. The communication proved vital to increasing awareness of the reports’ functionality and to encouraging students to begin exploring how the reports might serve them. The second round of interviews and surveys with the students showed an increase in the use of the reports, as well as a deeper understanding of how to critically reflect and act on data gathered from the LAs.

While it did take longer than anticipated for students and academic leads to become accustomed to the Zenith reports, ultimately the later evaluation revealed that some students were starting to use the reports for supporting their academic self-efficacy, including critical reflection on study techniques and progress.

“It has been of assistance to me to see an up-to-date rank on my scores compared with my cohort’s. It has let me gauge whether the work I am doing is appropriate to the grades I would like to receive in this unit. The visualization of my engagement with online learning compared to my cohort has been interesting, especially when it has notified me that my learning is a week or two behind other people”. (Zenith module/unit academic leader)

LAs and transitions

Our findings also illuminated the importance of appreciating and positioning students’ responses to LA within their personal and educational experiences – leading to new insights into what they appeared to need to support their learning journeys, especially from the perspective of growing maturity in learning approaches. The Zenith respondents represented students in transition in a variety of ways. Some were in their first higher education experience following high school; some were undertaking online or blended learning for the first time; some had arrived in Australia to study for the first time; others had returned to university from study breaks, overseas study or work.

As our methods within the evaluation were largely participative, we did not seek to determine quantitative or demographic data on these differing transition categories. However, from students’ own voices, we note that the previous experiences, context and moment in time when they encountered the opportunities offered by Zenith clearly affected the reports’ acceptability and usability for them.

For example, some students reported their keenest interest in the comparative graph portraying their grade point average in relation to their peers. In other words, of all the data presented, these students were most interested in their marks. We noted that most of these students were “straight from school” and in their first semester at university. Nevertheless, for some of these students the Zenith visual reports provided a way to initiate their self-reflection and to self-observe their engagement and progression in the learning experience:

“A way to gauge my performance against that of my peers and a way to evaluate and reflect upon my engagement with the unit”. (Zenith student)

“The Zenith report to me is a report of reflection. It shows the areas where you may be lacking and encourages me to improve and strive for improvement.” (Zenith student)

Academic staff confirmed the focus on marks, particularly for “school leavers” in transition to their first ever experience of university. Academics noted that for these students their recent experiences of secondary school laid the foundation for how they regulated their time, managed their efforts, and approached their grades. This transition effect may be particularly marked at UWA, where many students have had pressure or coaching to achieve the high grades required for entry.

“… that’s how they equate it, time equals marks, and that’s the main argument, I spent three hours on this, and they only spent an hour and I got less marks than them…that’s a classic argument you get in first semester, first year.” (Zenith academic)

“…it sounds a little bit like they ought to be self-sufficient in a way, but at the same time…for me it’s first semester first year, and I can tell an awful lot of them have been used to querying everything in school and getting higher marks because they’ve queried it, and that’s a learnt behaviour, and it’s a learned behaviour that they can email you and ask you for an extension…” (Zenith module leader)

The findings also revealed that slightly more mature (25+) students appeared to be less likely to use the Zenith reports, possibly due to their alternative motivating factors for studying at university. We noted that mature-aged students were markedly less concerned with the comparative grade graph than the school leavers.

“I think the idea of Zenith is good, I personally don’t need a lot of motivating so for me it’s not going to prompt me anymore. It’s mildly interesting to see where I’m sitting compared to other people, but that’s not my motivation…but I think it might really suit other people and if it’s working really well, it’s good for younger students who might need to realize they either need to work harder and apply themselves more if they’re not getting the marks they want…” (Zenith student)

Another mature-aged student gave further insights into the differing motivating factors, emphasizing alternative priorities and self-awareness:

“…I think I would use it more when I was younger – if I can actually touch on that. Whereas now, because I am self-motivated, I don’t really care what other people are doing. Cause I’m here to do my objectives, to meet my goals. Whereas before I think it was more peer…what everybody else is doing, like you know when you’re coming out of high school, what is everybody else doing, where am I sitting. It would have been a lot more, not useful, but I would’ve compared myself a lot more” (Zenith student)

LAs for effort and self-regulation

Another strong theme was that Zenith appeared to offer a new tool to foster self-regulated learning in a variety of ways, including time management:

“...So I can see that my accesses are high on some things but I’m not getting as much as some of the others who have lower accesses, so that sort of told me to manage my time well and actually look at the information properly as opposed to coming back to it all the time.” (Zenith student)

The Zenith reports also assisted with managing effort towards achieving the desired grades:

“It really helps you to realize the point of where you should be, especially at the beginning of the unit and then you can sort of build off of that. So I have an assessment coming up tomorrow and it sort of helps me to determine how much effort I should be putting in to get the average and then how much more effort I should put in to get the higher percentages.” (Zenith student)

There were frequent and persistent reports of boosts to motivation and confidence:

“...Allows me to monitor my learning and gauge my progress of the course in a way that motivates me to ensure I am committing to my studies.” (Zenith student)

“I have loved having access to the Zenith report as I feel it is a fantastic motivating method and also an indication of the level of achievement I have accomplished which helps boost my confidence.” (Zenith student)

There was not universal agreement from students on this theme:

“The Zenith report has not assisted with my studies; the information it gives regarding LMS/VLE use is irrelevant. If you have done all the relevant readings and submitted any online assessments, as well as looking at the power points uploaded to the LMS to fill in any gaps in your notes, that’s what matters, not how many times you have logged in to view the unit… Zenith therefore does not give information that is relevant to helping a student improve.” (Zenith student)

LAs enhancing “The Third Space”

“It has almost made a third player in the teacher-student dynamic, thereby allowing them to see their own level of engagement (measured by clicks) as separate from themselves and not assessed or produced by me as UC [unit-coordinator- i.e. their academic teacher]. So an ancillary measure that allows reflection for the students” (Zenith academic leader)

Through the analysis of the data and reflections on the Zenith pilot, the researchers came to conceptualize LAs as a metaphoric window positioned between the learner and their digital online space and experience. The window facilitates the learner’s ability to see into their activities, contribution, participation and engagement in the online learning environment. The online learning space feeds its responses to the reflective window space, helping to better connect all those involved and significant in the learning process. In this sense, the “third space” (Schuck et al., 2017; Pane, 2016) that LAs create makes the online learning component of a digital learning environment and experience more likely to promote learners’ critical reflection on their learning behaviours and strategies. In other words, rather than perceiving LA as a by-product of the learning process, it can be viewed as a third space between the learner and other human supporters, especially the academic teacher.

The discussion on third players and third spaces emerged from the evaluation rather than being set up as a hypothesis to test. Therefore, they remain little more than an idea for interpretation at this stage. One of the authors recalls this idea emerging in the early days in grounded modelling of online culture (Prinsloo, Slade, & Galpin, 2012). However, we report our interest here because, as the practice of LA matures, “third environments” may prove to be valuable.

Third Space is a postcolonial socio-cultural theory coined by Professor Bhabha (2012). It challenges notions of culture as being singular and unitary and confronts simplistic “us vs them” discourses. The theory sees culture as being inherently plural and hybrid, disrupting assertions of there being pure and original cultural forms, which then disturb and question relationships (Bellocchi, 2009). Bhabha calls on notions of third space that “makes the structure of meaning and reference an ambivalent process, [and] destroys... [the] mirror of representation in which cultural knowledge is customarily revealed as an integrated, open, expanding code” (Bhabha, 1994; p.54). In other words, culture, in its multiple forms, types, continuities and changes, exists in this idea of a third space – a non-tangible, open and constantly transforming space. Another sociologist, Ray Oldenburg, first used the notion of third place – a physical place which is separate from the main ones people occupy, such as home and work. The key elements that make a third place are: it is easily accessible and available when you want it; it is a neutral place, with no obligation to be there; and there are regulars, those who occupy the place and become familiar people that you can collaborate with (Woodside, 2016). Some research looks at online spaces, such as massive multiplayer gaming and social media, as third places (Edirisinghe, Nakatsu, Cheok, & Widodo, 2011; Steinkuehler & Williams, 2006).

The Zenith team found that the LMS/VLE technology platform provided a digital place for the students to enter, to navigate and use the tools within that platform, and to connect and collaborate with the other students and their academic teachers. We liken the LAs to the third space – capturing the interactivities of the place and mirroring such information to the “occupants”. How they interpret the reflection of their online activity, or what they choose to do with the information, is open to them and has many possibilities. The third space is reflective; it is open and adaptable to the individual’s needs and interpretations.

Summary Findings from Aims

- We sought to establish how the LAs visual reports might introduce more self-efficacy in the University’s students. The best we can report is that there were encouraging signs of the potential, and huge “lessons” learned to improve the volume and scalability in the direction of promoting self-efficacy. Some students clearly engaged and were interested in visual reports of personal information that they had not encountered before, and we noted enthusiasm in some that could be considered to be contributing to motivation.

- We sought to explore how the LAs reports might function as additional and fast feedback to students, which we conceived as a form of “electronic mentoring”. The most notable and demonstrable outcome was that the rate of students seeking help with their academic work in a timely manner increased dramatically. We considered that they were indeed treating the reports as fast feedback on their studies and were willing to take appropriate action to seek development for themselves.

Emergent findings

Zenith was complex and ambitious. The outcomes reported here are one slice through a wide variety of data and experiences from its pilot. There is no doubt that LAs are part of a complex adaptive ecosystem of learning. Therefore, in addition to investigating and reporting on our evaluation intent, we feel it is valuable also to note some emergent outcomes from the Zenith study.

LAs need to be understood within the contexts in which they are utilized. In other words, students’ engagement and experience differ depending on their previous experiences and across different disciplines and levels of higher education. In short, different learner needs, both articulated and more subtle, result in various responses to the LAs.

The early and ongoing communication with all stakeholders proved essential for the viability and acceptability of the Zenith project. Similarly, the constant challenge and engagement from the students’ representatives proved truly invaluable. The availability and benefits of LAs need to be communicated often to encourage students to use them. We established that LAs will be embraced, “liked” or not, depending on how the reports can support the individuals’ needs, wants and ways of going about their learning. The use of data for self-information for learning online is clearly still in its infancy. It is also highly context dependent, particularly for the less mature learner. For example, if the tool has not been fully embedded into the learning design of the learning module, and/or the use of the reports and guidance on how to interpret them have not been encouraged, the usefulness of LAs will not be realized by students. In other words, LAs are not (yet) a “natural” approach for university students.

The Zenith study contributed to raising the awareness and the understanding of LAs as an important teaching and learning tool (Wise, 2019). It has much potential, including assisting students with self-directing their learning. The study did not “prove” that we enhanced self-efficacy (perhaps a much longer process would be needed), but many of the responses and outcomes offer clues to somewhat different student behaviour. It became clear to us that for Zenith LAs to promote self-efficacy, students would need to acquire the ability to mine and recognize “actionable insights” – in other words, not only to receive the data, but to be self-aware enough and have the capability to reflect and act upon it (Jørnø & Gynther, 2018; Wise, 2014).

For this awareness and connection to be realised and utilised, students and their academic teachers need to recognise the potential and usability of the LAs (Jørnø & Gynther, 2018). Zenith revealed that it was not enough simply to provide the reports; like Klein et al. (2019), we found that ongoing support and communication on how to use and interpret them was required. We noted that a serious challenge is that many learners, especially those in transition from school or university, do not have a clear perception of where to aim their learning. Feedback from the academic teacher may be infrequent and retrospective – typically on assignments – and of course may reflect an emphasis on “writing for the exam” rather than a holistic and contextualised view of learning progress (Ritsos & Roberts, 2014).

The Zenith researchers noted that appropriate use of LAs to promote student learning and support at key points has the potential to strengthen networks within the university. For example, even if the LAs reports did not resonate with a particular student for self-reflection purposes, they did assist in alerting that student to use support services. The committed owners of these services often lament that students come to them late for help, if at all. Hence academic support services are used insufficiently by students, or too late to be of value. Zenith noted a much earlier increase in the use of campus-based services. It is possible to imagine that a similar process may be found with other student support services, which we were unable to test directly.

Zenith found that it is important to educate students about the role of privacy of the data being used for LAs. Students should understand that the LAs data and information is theirs, and the university is revealing it to them in a frequent and visual form so that they can track their learning. Most students who responded to the Zenith evaluation understood this proposition, i.e. they were satisfied that the university was avoiding a “Big Brother” approach, and instead was providing potential assistance.

One of the early concerns from the academic module leaders was that the LAs might in practice result in unhealthy competition and increased stress for the students (Tan et al., 2017), or that students would be demotivated by identifying that their position in class was lower than others. In practice there was no evidence in our limited study that any of this was happening. Whilst we encouraged the use of the Zenith reports, we did not “push” or “require” students at any time to take part. Everything was purely voluntary and unpressured. Like Sclater (2017), we still have unanswered questions about why and in what way some students are motivated by metrics while others may be concerned, and whether other students might decide to put in less effort and time to “get by” if they were proving “average”. In any case, we conclude that some self-efficacy was in play and decisions were being taken.

There was considerable evidence that students were unaware of the often extensive design and pedagogy involved in their digital modules, frequently seeing the face-to-face activities as the “course”. Some students did demonstrate the metacognitive and self-efficacy skills required to benefit; others simply did not.

From this large pilot our overall conclusion is that all of us involved in the development and research around student analytics must build confidence in our learners that the data they are receiving is accurate and meaningful, appropriately and ethically presented, and must offer the maximum assistance to deploy it on their journey towards self-efficacy and responsibility for their own learning.

Outcomes

Zenith gave ongoing – day-to-day and week-to-week – insights to a whole R & D team (which included library, study support, technical helpdesk, governance as well as the students, academics and researchers) and changed their views of the potential of intervention projects, the need to design for purpose and be flexible in terms of outcomes and learnings.

At the time of writing and reflecting on this paper, we acknowledge there are still many challenges (see, for example, Archer & Prinsloo, 2019). The issue of ethics continues to be researched and debated, and good practice offered (see, for example, Kitto & Knight, 2019; Slade & Tait, 2019).

However, Zenith discovered that LAs, once set up within a contextualized, ethical and supportive project, could provide students with new ways of gaining insights into their learning experiences in an unobtrusive, easily accessed way.

The Zenith Research team philosophy arose from a desire to enhance the students’ experience – not unusual of course – rather than a “technical” or “fascination with the data” perspective. The whole team noted how complex educational systems are and hence how unpredictable it is to add yet another dimension – in this case the LAs, the Zenith students, academics. This is not a simple and straightforward means to an end; rather it is a part of the educational process. LAs have the potential to enhance learning and experience through perhaps promoting self-reflective and self-efficacy practice and by drawing attention to students’ effort and achievements. As such, they are part of the bigger picture of learning, rather than “an outcome”. We believe these conclusions are important points for all educational R & D projects that involve innovations and complex systems.

Limitations of the Study

This paper is based on a student-centred “slice” through the experience and the data. The Zenith pilot took place in the context of wide-scale changes in education provision at UWA. As such, Zenith was competing for student and staff attention and engagement. As we have noted, in the first few weeks there was low student engagement with the Zenith reports, and the early focus groups and surveys were limited. These limitations were mitigated as the semester went on by the project team’s ability to solve technical problems and improve communication to the students, leading to the valuable ethnographic understandings reported here.

We do not claim, nor was it our aim, to point to quantifiable “changes” in the students that we engaged. Instead, we sought to illuminate the context in which transformative approaches to technology and data can be considered in higher education in the future.

Further work

There was some small evidence that some students were attempting to “quantify” themselves, and were recognizing the value of benefitting their learning through self-awareness, to good effect on their educational success (Sclater, 2017; p.127). Some evidence was found in the observation data – for example, that the visualization was drawing some attention to the positioning of personal activity in the wider cohort, that those students who looked regularly at Zenith data were seeing the reports as a “nudge” (Dimitrova, Mitrovic, Piotrkowicz, Lau, & Weerasinghe, 2017), and that the learners felt part of a wider community tackling the same issues. However, we were unable to prove that personal behaviour was changing; we suggest this is because much more time would be needed, as learning is a long process.

Further, we accept the criticism of Jørnø and Gynther (2018) that, in this first Zenith pilot, insufficient attention was given to feedback loops and to supporting students’ abilities to act on insights. In this pilot, however, this was neither testable nor provable, and it is certainly a research question for future student-focused and student-directed LA research and development, most certainly through a more longitudinal and process-focused study of students’ progress, with a stronger link between self-awareness and reflection (Tan et al., 2018) and students’ views of the overall impact on their learning.

We identified some insights suggesting that the context and personal learning experiences that students brought to their learning impacted directly on their experiences and on their ability to benefit from, make sense of and respond to the opportunities of LAs (see, for example, Tan et al., 2017). It was outside our scope to explore these in any detail, but we would seek to ensure that the personal and context-sensitive characteristics of learners are addressed in future research.

Practical Recommendations

Students and their representatives need to be considered a critical stakeholder group in project planning and action research as we build upon the benefits of LAs. Considerable effort needs to be put into early and frequent communication to the students about the introduction of LAs, sustained throughout the semester. If academic staff are using LAs for the first time, it is beneficial not only to support them with communication materials, workshops and video, but also to offer to brief students directly.

The LAs reports need to be exceptionally clear and visually appealing, and easily accessible within the VLE/LMS. Students need support in understanding how LAs may help them – in short, how to be self-reflective and promote their self-efficacy. The benefits of the LA reports may need to be targeted towards different cohorts and their needs.

References

- Ali, L., Asadi, M., Gašević, D., Jovanović, J., & Hatala, M. (2013). Factors influencing beliefs for adoption of a learning analytics tool: An empirical study. Computers & Education, 62, 130–148.

- Archambault, L., Wetzel, K., Foulger, T. S., & Kim Williams, M. (2010). Professional Development 2.0. Journal of Digital Learning in Teacher Education, 27(1), 4–11. https://doi.org/10.1080/21532974.2010.10784651

- Archer, E., & Prinsloo, P. (2019). Speaking the unspoken in learning analytics: troubling the defaults. Assessment & Evaluation in Higher Education. https://doi.org/10.1080/02602938.2019.1694863

- Bandura, A. (1977). Self-efficacy: toward a unifying theory of behavioral change. Psychological Review, 84(2), 191.

- Beetham, H., & Sharpe, R. (2013). Rethinking pedagogy for a digital age: Designing for 21st century learning. Routledge.

- Bellocchi, A. (2009). Learning in the third space: A sociocultural perspective on learning with analogies. Queensland University of Technology.

- Bhabha, H. (1994). The Location of Culture (2nd ed.). Routledge.

- Bhabha, H. K. (2012). The location of culture. Routledge.

- Broadbent, J. (2016). Academic success is about self-efficacy rather than frequency of use of the learning management system. Australasian Journal of Educational Technology, 32(4).

- Broadbent, J., & Poon, W. L. (2015). Self-regulated learning strategies & academic achievement in online higher education learning environments: A systematic review. The Internet and Higher Education, 27, 1–13.

- Butler, D. L., & Winne, P. H. (1995). Feedback and self-regulated learning: A theoretical synthesis. Review of Educational Research, 65(3), 245–281.

- Chatti, M. A., Dyckhoff, A. L., Schroeder, U., & Thüs, H. (2012). A reference model for learning analytics. International Journal of Technology Enhanced Learning, 4(5–6), 318–331.

- Costa, C. (2018). Digital scholars: A feeling for the academic game. In Feeling Academic in the Neoliberal University (pp. 345–368). Springer.

- Daniel, B. K. (2017). Big Data and data science: A critical review of issues for educational research. British Journal of Educational Technology, 00(00). https://doi.org/10.1111/bjet.12595

- De Laat, M., & Prinsen, F. (2014). Social learning analytics: Navigating the changing settings of higher education. RPA, 9(Winter), 51–60. https://doi.org/10.1017/CBO9781107415324.004

- Dimitrova, V., Mitrovic, A., Piotrkowicz, A., Lau, L., & Weerasinghe, A. (2017). Using Learning Analytics to Devise Interactive Personalised Nudges for Active Video Watching. Proceedings of the 25th Conference on User Modeling, Adaptation and Personalization – UMAP ’17, 22–31. https://doi.org/10.1145/3079628.3079683

- Echeverria, V., Martinez-Maldonado, R., Granda, R., Chiluiza, K., Conati, C., & Shum, S. B. (2018). Driving data storytelling from learning design. Proceedings of the 8th International Conference on Learning Analytics and Knowledge - LAK ’18, 2018(March), 131–140. https://doi.org/10.1145/3170358.3170380

- Edirisinghe, C., Nakatsu, R., Cheok, A., & Widodo, J. (2011). Exploring the concept of third space within networked social media. Lecture Notes in Computer Science (Including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), 6972 LNCS, 399–402. https://doi.org/10.1007/978-3-642-24500-8_51

- Elias, S. M., & MacDonald, S. (2007). Using past performance, proxy efficacy, and academic self-efficacy to predict college performance. Journal of Applied Social Psychology, 37(11), 2518–2531.

- Holton, J. A., & Walsh, I. (2016). Classic Grounded Theory: Applications with Qualitative and Quantitative Data. Sage.

- Ifenthaler, D. (2017). Are higher education institutions prepared for learning analytics? TechTrends, 61(4), 366–371.

- JISC (2017). Learning analytics in higher education | Jisc. Retrieved April 6, 2018, from https://www.jisc.ac.uk/reports/learning-analytics-in-higher-education

- Kitto, K., & Knight, S. (2019). Practical ethics for building learning analytics. British Journal of Educational Technology, 50, 2855-2870. doi:10.1111/bjet.12868

- Kozinsky, S. (2017 July 24). How Generation Z is Shaping the Change in Education. Forbes [Blog post]. Retrieved April 5, 2018, from https://www.forbes.com/sites/sievakozinsky/2017/07/24/how-generation-z-is-shaping-the-change-in-education/#8eb07f565208

- Kruse, A., & Pongsajapan, R. (2012). Student-centered learning analytics. CNDLS Thought Papers, 1–9. https://doi.org/10.1007/978-1-4419-1428-6_173

- LAK (2011). 1st International Conference on Learning Analytics and Knowledge. Retrieved August 15, 2018, from https://tekri.athabascau.ca/analytics/

- Jayasuriya, L. (2006). Spirit of a Civic University. The New Critics, August 2006(2). Retrieved from http://www.ias.uwa.edu.au/new-critic/two/civicuniversity

- McNiff, J. (2013). Action research: Principles and practice. Routledge.

- Papamitsiou, Z., & Economides, A. A. (2015). Temporal learning analytics visualizations for increasing awareness during assessment. International Journal of Educational Technology in Higher Education, 12(3), 129–147.

- Prinsloo, P., Slade, S., & Galpin, F. (2012). Learning analytics: challenges, paradoxes and opportunities for mega open distance learning institutions. Proceedings of the 2nd International Conference on Learning Analytics and Knowledge – LAK ’12, 130–133. https://doi.org/10.1145/2330601.2330605

- Reigeluth, C. M. (2012). Instructional theory and technology for the new paradigm of education. RED: Revista de Educación a Distancia, (32), 1–18.

- Reigeluth, C. M., & Karnopp, J. R. (2013). Reinventing schools: It’s time to break the mold. R&L Education.

- Ritsos, P. D., & Roberts, J. C. (2014). Towards more visual analytics in learning analytics. In Proceedings of the 5th EuroVis Workshop on Visual Analytics (pp. 61–65).

- Ruggiero, D., & Mong, C. J. (2015). The teacher technology integration experience: Practice and reflection in the classroom. Journal of Information Technology Education, 14.

- Salmon, G. (2019). May the Fourth Be with You: Creating Education 4.0. Journal of Learning for Development, 6(1), 95–115.

- Salmon, G., & Wright, P. (2014). Transforming Future Teaching through ‘Carpe Diem’ Learning Design. Education Sciences, 4(1), 52–63. https://doi.org/10.3390/educsci4010052

- Salmon, G., Armellini, A., Alexander, S., & Korosec, M. (2019). Paper presented to OEB19. November. https://www.gillysalmon.com/uploads/5/0/1/3/50133443/carpe_diem_for_transformation_paper_final_nov_2019_.pdf

- Schunk, D. H. (1991). Self-efficacy and academic motivation. Educational Psychologist, 26(3–4), 207–231.

- Sclater, N. (2017). Learning analytics explained. Routledge.

- Sclater, N., Peasgood, A., & Mullan, J. (2016, April). Learning Analytics in Higher Education: A review of UK and international practice.

- Siemens, G. (2013). Learning analytics: The emergence of a discipline. American Behavioral Scientist, 57(10), 1380–1400.

- Siemens, G., & Long, P. (2011). Penetrating the Fog: Analytics in Learning and Education. EDUCAUSE Review, 46, 30–32.

- Slade, S., & Tait, A. (2019). Global Guidelines: Ethics in Learning Analytics. Retrieved December 3, 2019 from https://www.learntechlib.org/p/208251/

- Steinkuehler, C. A., & Williams, D. (2006). Where everybody knows your (screen) name: Online games as “third places.” Journal of Computer-Mediated Communication, 11(4), 885–909. https://doi.org/10.1111/j.1083-6101.2006.00300.x

- Stewart, C. (2017). Learning Analytics: Shifting from theory to practice. Journal on Empowering Teaching Excellence, 1(1), 10.

- Swain, H. (2018, February 7). Opportunity and risk: universities prepare for an uncertain future | Higher Education Network | The Guardian [Blog post]. Retrieved April 5, 2018, from https://www.theguardian.com/higher-education-network/2018/feb/07/opportunity-and-risk-universities-prepare-for-an-uncertain-future

- Tan, J. P.-L., Koh, E., Jonathan, C. R., & Yang, S. (2017). Learner Dashboards a Double-Edged Sword? Students’ Sense-Making of a Collaborative Critical Reading and Learning Analytics Environment for Fostering 21st Century Literacies. Journal of Learning Analytics, 4(1), 117–140.

- The University of Western Australia (n.d.). History of the University. Retrieved from http://www.web.uwa.edu.au/university/history

- Universities UK. (2015). Quality, equity, sustainability: the future of higher education regulation, 45.

- Usher, E. L., & Pajares, F. (2008). Self-efficacy for self-regulated learning: A validation study. Educational and Psychological Measurement, 68(3), 443–463.

- Woodside, H. (2016, March 15). Engagement in the ‘third space’ – Digital democracy, news, thinking, tips & tricks. Delib, Practical Democracy News [Blog post]. Retrieved June 14, 2018, from https://blog.delib.net/engagement-in-the-third-space/

- Zenith project : Education at UWA : The University of Western Australia. (n.d.). Retrieved June 14, 2018, from http://www.worldclasseducation.uwa.edu.au/education-futures/strategic-projects/zenith-project

- Zimmerman, B. J. (2000). Self-efficacy: An essential motive to learn. Contemporary Educational Psychology, 25(1), 82–91.

- Zimmerman, B. J. (2002). Becoming a self-regulated learner: An overview. Theory into Practice, 41(2), 64–70.

Acknowledgements and thanks

The authors would like to thank all the students who took part in the Zenith project, and their student representatives. We also thank the nine members of academic staff and their teaching teams who committed to the innovation in their courses and engaged with development and learning design to make it possible; the numerous colleagues across the UWA campus who freely offered time and advice throughout the conduct of the project and its evaluation; and the staff from the support services who responded to the Zenith students and kept records for the Zenith team. Last but definitely not least, we thank the highly dedicated and insightful Zenith project team, including the researchers.

Approval to conduct this research was provided by the University of Western Australia with reference number RA/4/1/8968, in accordance with its ethics review and approval procedures.